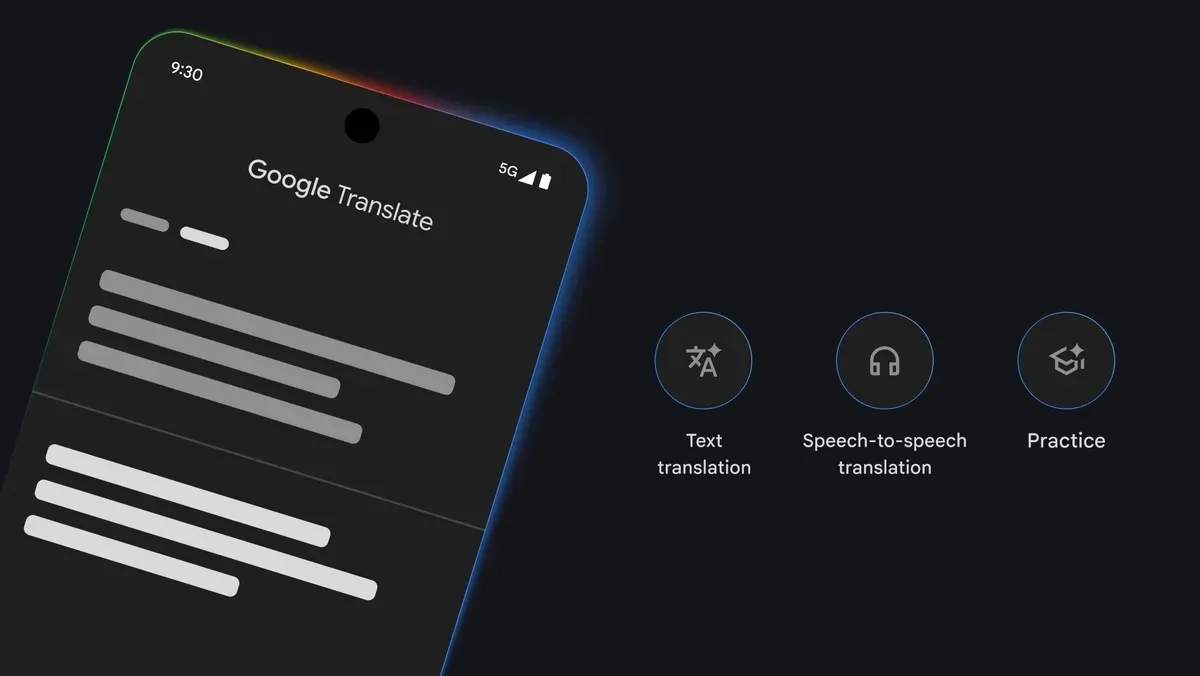

Google has significantly expanded the reach of its live audio translation capabilities, bringing the highly anticipated feature to iPhones through a pivotal update to its Google Translate application. This strategic move effectively transforms any pair of connected headphones into a real-time interpreter, promising to dissolve language barriers for millions of iOS users globally. The announcement marks a substantial step forward in accessible communication technology, democratizing a feature that has long been a staple of science fiction and a complex challenge for engineers.

In a recent blog post detailing the rollout, Sasha Kapur, Google Translate product manager, highlighted the immediate and profound implications for global travel and interpersonal connection. "Live translate is a game-changer for getting recommendations, listening to train announcements and connecting with fellow travelers," Kapur stated, underscoring the practical utility of instantaneous, spoken language conversion in diverse environments. This update is poised to redefine how iPhone users navigate foreign countries, engage with international communities, and conduct cross-cultural exchanges, offering an unprecedented level of linguistic fluidity.

The Evolution of Real-Time Translation Technology

The dream of a universal translator, famously conceptualized in works like Star Trek and the "Babel fish" from The Hitchhiker’s Guide to the Galaxy, has long captivated the human imagination. For decades, the reality was far more limited, relying on dictionaries, phrasebooks, and the laborious process of human translation. The advent of digital technology began to change this landscape, first with text-based translation services, then with rudimentary speech-to-text and text-to-speech functionalities.

Google Translate, initially launched in 2006, revolutionized machine translation by leveraging statistical machine translation (SMT) techniques. Its early iterations allowed users to translate blocks of text between various languages, a significant improvement over previous methods. Over the years, Google steadily enhanced its offering, introducing features like camera translation (translating text in images) and a conversational mode that enabled two individuals to speak into a device and have their words translated and spoken aloud.

A major leap occurred in 2016 with the adoption of Neural Machine Translation (NMT), a deep learning approach that translates entire sentences at once, considering broader context rather than individual words. This dramatically improved the fluency, accuracy, and naturalness of translations. Concurrently, advancements in automatic speech recognition (ASR) and text-to-speech (TTS) synthesis, powered by massive datasets and sophisticated AI algorithms, laid the groundwork for truly "live" audio translation. Google’s Pixel Buds, introduced in 2017, offered an early glimpse into wearable, real-time translation, albeit tied to specific hardware. This latest iPhone update represents the culmination of these advancements, bringing a refined and more accessible version of this technology to a broader audience.

How the Technology Works: A Seamless Linguistic Bridge

The underlying mechanism of Google’s live audio translation is a sophisticated interplay of artificial intelligence components. When someone speaks, the audio signal is captured by a microphone and immediately processed by an automatic speech recognition (ASR) engine. This engine converts the spoken words into text, filtering out background noise and attempting to decipher accents and speech patterns.

Once converted to text, the input is fed into Google’s advanced Neural Machine Translation (NMT) engine. This engine, trained on billions of parallel sentences across numerous languages, analyzes the semantic and syntactic structure of the input phrase and generates a translated version in the target language. Unlike older systems that translated word-for-word, NMT considers the entire sentence, resulting in more contextually appropriate and grammatically correct output.

Finally, the translated text is passed to a text-to-speech (TTS) synthesizer, which generates natural-sounding audio in the desired language, delivered directly to the listener’s headphones. This entire process, from speech input to audio output, runs in near real time, with only a brief delay between the original speech and its translation, so a conversation can keep its natural rhythm. While much of the heavy computation for ASR and NMT often occurs in Google’s cloud infrastructure, continuous improvements in on-device AI processing and more efficient algorithms help minimize latency and keep the experience smooth, even when internet connectivity varies. The system is designed to intelligently anticipate phrases and learn from user interactions, further refining its accuracy over time.
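The three stages described above (speech recognition, translation, speech synthesis) can be pictured as a simple chain in which each stage consumes the previous stage's output. The Python sketch below is only an illustration of that chaining and of how per-stage latency might be measured. It is not Google's implementation: the functions `recognize_speech`, `translate_sentence`, and `synthesize_speech`, the `PHRASEBOOK` table, and the string-based stand-ins for audio are all hypothetical placeholders for real models.

```python
import time
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class StageResult:
    output: str        # what the stage produced
    elapsed_ms: float  # how long the stage took, in milliseconds


def timed(stage: Callable[[str], str], payload: str) -> StageResult:
    """Run one pipeline stage and record how long it took."""
    start = time.perf_counter()
    output = stage(payload)
    return StageResult(output, (time.perf_counter() - start) * 1000.0)


# Hypothetical toy data: a real NMT engine is a trained model, not a lookup table.
PHRASEBOOK = {"where is the train station?": "Wo ist der Bahnhof?"}


def recognize_speech(audio: str) -> str:
    """ASR stand-in: the 'audio' is assumed to be a transcript tagged 'audio:'."""
    prefix = "audio:"
    text = audio[len(prefix):] if audio.startswith(prefix) else audio
    return text.strip()


def translate_sentence(text: str) -> str:
    """NMT stand-in: looks up the whole sentence, not individual words."""
    return PHRASEBOOK.get(text.lower(), text)


def synthesize_speech(text: str) -> str:
    """TTS stand-in: tags the text instead of producing a waveform."""
    return "speech:" + text


def live_translate(audio: str) -> Dict[str, StageResult]:
    """Chain ASR -> NMT -> TTS, keeping each stage's output and timing."""
    asr = timed(recognize_speech, audio)
    nmt = timed(translate_sentence, asr.output)
    tts = timed(synthesize_speech, nmt.output)
    return {"asr": asr, "nmt": nmt, "tts": tts}


result = live_translate("audio: Where is the train station?")
print(result["tts"].output)
total_ms = sum(stage.elapsed_ms for stage in result.values())
print(f"end-to-end latency of the toy pipeline: {total_ms:.3f} ms")
```

Because the stages run one after another, the delay a listener perceives is roughly the sum of the three stage times plus any network round trips, which is why faster on-device processing matters.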

Chronology of Google Translate’s Live Audio Ambitions

- 2006: Google Translate initially launched as a web-based text translation service.

- 2010: The Google Translate app for Android was introduced, featuring basic text input.

- 2011: "Conversation Mode" (later "Two-way automatic speech translation") debuted, allowing users to speak into their phone and have it translate and speak back.

- 2014: Google acquired "Word Lens," whose instant camera translation (point your phone at text, and it translates on screen) was later integrated into the app.

- 2017: Google Pixel Buds launched with a "real-time translation" feature, demonstrating the potential for wearable translation, though initially tethered to specific Pixel phones.

- 2019-2021: Continued improvements to "Interpreter Mode" within Google Assistant and Google Translate, expanding language support and accuracy for live conversations across various smart devices.

- 2023: Google rolled out live audio translation to the Google Translate app on Android, leveraging the device’s microphone and existing headphones.

- Late 2023/Early 2024: The current update extends this advanced live audio translation capability to iPhones, making it accessible through the Google Translate app with any connected headphones.

Competitive Landscape and Google’s Strategic Advantage

While Google’s latest offering is groundbreaking in its accessibility, it operates within a competitive landscape. Apple, Google’s primary rival in the mobile ecosystem, offers a similar "Live Translation" tool. However, Apple’s feature is notably more restrictive, requiring specific hardware: it necessitates the use of select AirPods models (such as AirPods Pro 2nd generation) paired with an iPhone 15 Pro or later model. This exclusivity limits the feature’s reach to a smaller subset of Apple’s user base, specifically those who have invested in the newest and most premium devices.

Google’s strategy, by contrast, emphasizes broad compatibility. By enabling its live audio translation with "any connected headphones," including older models, standard wired earbuds, or third-party Bluetooth headsets, Google democratizes access to this advanced technology. This inclusive approach significantly expands the potential user base, allowing millions of existing iPhone owners to leverage the feature without needing to upgrade their hardware. This move is a clear competitive differentiator, positioning Google Translate as the more universally accessible solution for real-time communication on iOS.

Other players in the translation technology market include Microsoft Translator, which offers its own suite of translation services across various platforms, and a range of dedicated handheld translator devices from companies like Travis and Waverly Labs. While these specialized devices often boast robust offline capabilities and custom-tuned microphones, they require an additional purchase and another device to carry. Google’s integration into an already ubiquitous smartphone app, coupled with its "any headphones" compatibility, provides a compelling value proposition that simplifies the user experience and reduces barriers to entry. This strategic decision by Google to prioritize accessibility on a rival platform underscores its commitment to making its core AI services widely available, regardless of the underlying hardware ecosystem.

Profound Implications for Travel and Tourism

Sasha Kapur’s emphasis on travel is particularly pertinent, as the global tourism industry continues its robust recovery and growth. According to the United Nations World Tourism Organization (UNWTO), international tourist arrivals reached 88% of pre-pandemic levels in 2023, with projections for full recovery and continued growth in the coming years. As more people venture across borders, the need for seamless communication tools becomes paramount.

The ability to receive real-time audio translations through headphones promises to transform the travel experience in numerous ways:

- Effortless Navigation and Information: Travelers can easily understand train station announcements, airport gate changes, or bus route information, even in languages they don’t speak.

- Enhanced Local Interactions: Asking for directions, ordering food at a restaurant, or seeking recommendations from locals becomes significantly less daunting. This fosters more authentic cultural immersion and reduces the stress associated with language barriers.

- Improved Safety and Security: In emergencies or critical situations, clear communication with local authorities or medical personnel can be life-saving.

- Business Travel: International business travelers can participate more effectively in meetings, understand local customs, and build stronger relationships without the constant need for a human interpreter.

- Hospitality Sector: Hotel staff, tour guides, and retail workers can better serve international clientele, leading to improved customer satisfaction and potentially increased revenue.

This feature moves beyond basic phrasebook lookups, offering a dynamic and interactive experience that allows for natural, spontaneous conversations, ultimately enriching the journey for millions of global explorers.

Broader Societal and Economic Impact

Beyond tourism, the widespread availability of live audio translation holds significant promise for various sectors and societal functions:

- Education: Facilitating international student exchange programs, enabling collaborative projects between students from different linguistic backgrounds, and assisting language learners by providing real-time feedback.

- Business and Commerce: Streamlining international business negotiations, supply chain management, and remote team collaborations. Companies can expand their global reach with greater ease, fostering economic growth and cross-border trade.

- Healthcare: Bridging communication gaps between healthcare providers and patients from diverse linguistic backgrounds, particularly critical in multicultural societies. This can lead to more accurate diagnoses, better treatment adherence, and improved patient outcomes.

- Public Services and Diplomacy: Assisting law enforcement, emergency services, and diplomatic missions in communicating with non-native speakers, ensuring equitable access to justice and essential services.

- Cultural Exchange and Social Integration: Empowering immigrants and refugees to navigate new environments, understand local communities, and integrate more effectively. It can foster deeper cultural understanding and empathy by making direct communication more accessible.

- Accessibility: Providing a vital tool for individuals with hearing impairments who rely on visual cues, or for those who struggle with traditional language learning methods, offering an alternative pathway to communication.

Challenges and Ethical Considerations

While the benefits are immense, live audio translation technology is not without its challenges and ethical considerations:

- Accuracy and Nuance: Despite significant advancements, AI translation can still struggle with idioms, sarcasm, highly technical jargon, specific cultural nuances, and context-dependent meanings. Misinterpretations, while less frequent, can still occur and potentially lead to misunderstandings.

- Privacy Concerns: Live audio processing raises questions about data privacy, especially when conversations contain sensitive personal or proprietary information. Users need assurance that their spoken words are handled securely and not stored indefinitely or used for unintended purposes.

- Internet Dependency: While some basic translation features can work offline, real-time, high-accuracy translation often requires a stable internet connection to leverage cloud-based AI models. This can be a limitation in remote areas or places with poor connectivity.

- Battery Consumption: Continuous audio processing and AI computation can be resource-intensive, potentially leading to increased battery drain on smartphones and connected headphones.

- Impact on Human Translators and Language Learning: There are ongoing discussions about how such advanced tools might impact the profession of human translators and interpreters, as well as the motivation for individuals to learn new languages. While AI can handle routine tasks, human expertise remains invaluable for complex, nuanced, or high-stakes communication.

- Potential for Misuse: Like any powerful technology, real-time translation could potentially be misused, for instance, in surveillance or to circumvent genuine communication barriers in unethical ways.

Industry Reactions and Future Outlook

The tech industry has largely lauded Google’s move, recognizing its strategic importance. Tech analysts are quick to point out that by democratizing access on iOS, Google not only enhances its own ecosystem but also sets a new standard for accessibility in real-time translation. "This is Google leveraging its AI prowess to win on an opponent’s turf," commented a senior analyst at a leading tech research firm, who wished to remain anonymous. "It’s about making their core services indispensable, regardless of your chosen hardware."

Travel industry stakeholders have also expressed enthusiasm. "Any technology that makes travel more seamless and less intimidating is a boon for our sector," said a representative from a major international airline association. "Removing language barriers encourages more people to explore, which benefits everyone."

Looking ahead, the trajectory of real-time translation technology points towards even greater seamlessness and integration. We can anticipate further advancements in AI that improve accuracy, reduce latency, and enable more natural, conversational flow. Future iterations might see deeper integration into smart glasses for overlaid translations, or even more subtle, less noticeable forms of assistance that blend into the fabric of daily life. The long-term vision remains a world where language is no longer a barrier, but merely another facet of human diversity, easily navigable through intelligent technology.

This latest update from Google is more than just a new feature; it is a significant milestone in the journey towards a truly interconnected and multilingual global society, making the dream of universal understanding a more tangible reality for millions of iPhone users worldwide.